Rethinking the Test Triangle

The test triangle is a useful mental model, but it's taught in a way that leads you astray.

The test triangle is one of the first mental models you encounter when learning how to test software. It tells you to write a lot of unit tests, fewer integration tests, and even fewer end-to-end tests. That’s not wrong. But the way it’s usually taught leaves out some important nuance.

The Classic Triangle

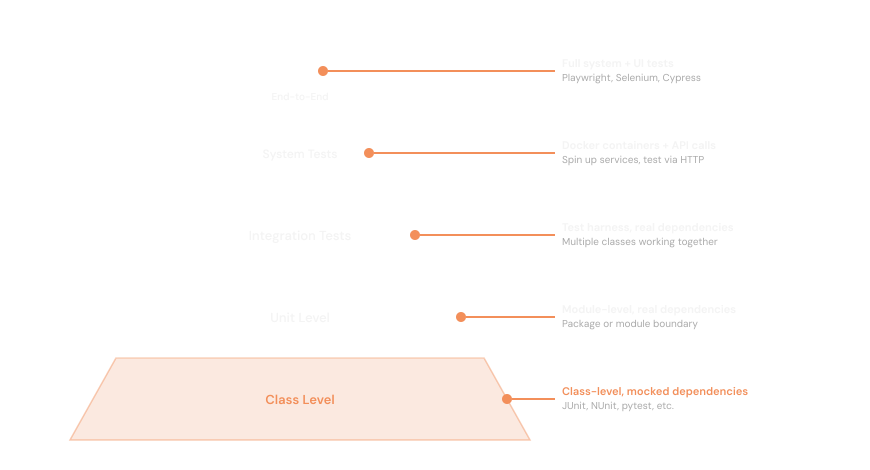

The standard model from bottom to top:

- Unit tests — test individual classes and their logic in isolation

- Integration tests (narrow) — test the interaction between a couple of classes or components

- Integration tests (broad) — test multiple services talking to each other

- System tests — run the whole application as a black box, often driven through the UI

The idea: cheap, fast tests at the bottom; expensive, slow tests at the top. Write more of the cheap ones.

You’ll find this model all over the internet, and as a rule of thumb it’s useful. There are no exact ratios. Nobody can tell you “write three unit tests for every integration test.” But it gives you a proportional sense of where your testing weight should sit.

The problem shows up when you follow the triangle all the way to the bottom.

At that level, the cost of testing starts to compound. A small change to your system can require three times as many changes to the unit tests around it. The tests stop feeling like a safety net and start feeling like a tax. You’ll even find people online suggesting you should just delete your unit tests once the class is written and passing. That such advice exists at all is a sign that the investment isn’t paying off the way it was supposed to.

There’s a pragmatic dimension to this that gets lost. In engineering, you don’t design at the lowest possible level just because you can. A hard drive doesn’t read one byte at a time. It reads whole sectors, typically 512 bytes or 4 kilobytes each, because that’s where the performance is. The granularity you choose has real costs. Testing at the class level is the byte-by-byte approach: technically thorough, practically expensive, and often the wrong unit to be working at.

Where Unit Tests Fall Short

Unit tests are useful when you’re writing code for the first time. They give you fast feedback on a class’s logic. But a fully passing unit test suite is not a guarantee that your system works.

Too often, class-level unit tests test the wrong thing at the wrong granularity.

A pure, dependency-free class is a rarity. Most classes you build have to collaborate with something else.

The best target for class-level unit tests is pure logic: algorithms, transformations, math. Things with no dependencies that just take input and return output.
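As a rough illustration of code that fits this description, here’s a minimal sketch in Python. The `apply_discount` function and its rounding rule are made up for this example. The point is only that there’s nothing to mock: the test is input in, output out.

```python
import unittest


def apply_discount(price_cents: int, percent: int) -> int:
    """Return the price after a percentage discount.

    The discount amount is rounded down to whole cents (a hypothetical rule).
    """
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return price_cents - (price_cents * percent) // 100


class ApplyDiscountTest(unittest.TestCase):
    def test_typical_discount(self):
        self.assertEqual(apply_discount(1000, 25), 750)

    def test_discount_amount_is_rounded_down(self):
        # 10% of 999 is 99.9 cents; only 99 cents come off.
        self.assertEqual(apply_discount(999, 10), 900)

    def test_rejects_out_of_range_percent(self):
        with self.assertRaises(ValueError):
            apply_discount(1000, 101)
```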

If you’re testing a tangle of if statements in isolation, your eyes are honestly faster.

The Mock Problem

Once a class has dependencies, you need mocks. Tools like Mockito (Java), Google Mock (C++), and unittest.mock (Python) let you replace real collaborators with test doubles so you can test a class in isolation.

Say you’re building a user registration flow. You write a test that verifies: a request comes in, the user gets validated, it gets saved to the database, and a message gets published to a broker. With mocks, you can verify that each of those calls happens, in the right order, with the right arguments.
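With Python’s `unittest.mock`, that kind of verification might look like the sketch below. `RegistrationHandler`, its three collaborators, and the `"user.registered"` topic are hypothetical names invented for this example, not taken from any real codebase.

```python
import unittest
from unittest.mock import Mock, call


class RegistrationHandler:
    """Hypothetical class under test with three injected collaborators."""

    def __init__(self, validator, repository, publisher):
        self.validator = validator
        self.repository = repository
        self.publisher = publisher

    def handle(self, request: dict) -> None:
        self.validator.validate(request)
        self.repository.save(request)
        self.publisher.publish("user.registered", request["email"])


class RegistrationHandlerTest(unittest.TestCase):
    def test_collaborators_called_in_order(self):
        # Child mocks of one parent record their calls, in order,
        # on parent.mock_calls.
        parent = Mock()
        handler = RegistrationHandler(
            parent.validator, parent.repository, parent.publisher
        )
        request = {"email": "a@example.com"}

        handler.handle(request)

        self.assertEqual(
            parent.mock_calls,
            [
                call.validator.validate(request),
                call.repository.save(request),
                call.publisher.publish("user.registered", "a@example.com"),
            ],
        )
```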

That’s useful. But here’s the problem: you’re verifying that your class uses an interface the way you think it works. You’re not verifying that the interface actually works that way.

JDBC result sets are a complicated interface. You can easily write mocks that model them incorrectly, and your tests will still pass. The same is true for REST clients. Your class can call a mocked client with the wrong headers, the wrong body shape, and the wrong error handling, and every test stays green.

Then you run an actual integration test and find out the real contract. You go back and fix the unit test to match.

Which raises the obvious question: what did the unit test actually get you? You still had to run an integration test to learn the correct answer. The unit test couldn’t surface the bug. That’s not a failure of the developer; it’s a fundamental limitation of testing a class in isolation when the real risk lives at the boundary.
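To make that concrete, here’s a small sketch of the failure mode. Everything in it is an assumption for illustration: `RegistrationService`, the `create_user` method, and both response shapes. `RealUserApi` is a stand-in for whatever the real collaborator does; in practice you’d only learn its contract by calling the real thing.

```python
import unittest
from unittest.mock import Mock


class RegistrationService:
    """Hypothetical class under test; `api` is any object with create_user()."""

    def __init__(self, api):
        self.api = api

    def register(self, email: str) -> int:
        response = self.api.create_user({"email": email})
        # Assumption baked into the code: the new id comes back under "id".
        return response["id"]


class RealUserApi:
    """Stand-in for the real collaborator, whose actual contract differs."""

    def create_user(self, payload: dict) -> dict:
        return {"user_id": 42, "email": payload["email"]}


class RegistrationServiceTest(unittest.TestCase):
    def test_register_returns_new_id(self):
        api = Mock()
        # The mock encodes the same wrong assumption as the code under test.
        api.create_user.return_value = {"id": 42}

        self.assertEqual(RegistrationService(api).register("a@example.com"), 42)
        api.create_user.assert_called_once_with({"email": "a@example.com"})


def register_against_real_collaborator() -> int:
    # Raises KeyError: the real response uses "user_id", not "id".
    return RegistrationService(RealUserApi()).register("a@example.com")
```

The mocked test is green, while `register_against_real_collaborator()` raises `KeyError`. Nothing in the unit test could have told you that.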

The deeper problem: classes aren’t written in parallel. You write one class, then another. But the actual runtime interaction is invisible until you run them together. What gets passed, what gets returned, what side effects fire — none of that is visible from either class alone.

The Maintenance Problem

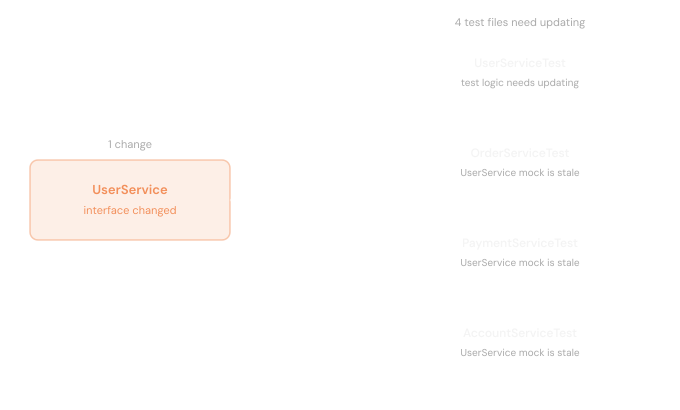

Every class-level unit test you write comes with three maintenance surfaces:

- The test itself — if the class interface changes, the test has to change. Modern refactoring tools make renaming methods straightforward, but any meaningful change to how the interface works requires revisiting the test.

- The assertions — the expected outputs in the test need to reflect the new behavior. That’s the point of the test.

- The mocks — every place that class is mocked now has stale assumptions. You have to hunt down each one and update the expected interactions to match the new interface.

It’s not just the test for the class that changed. It’s every test for every other class that depended on it. Scale that across an entire codebase and a small change to your system can require more modifications to tests than to the actual code.

And a lot of those test changes aren’t validating anything about the change you made. You’re just updating method names and argument lists to get the suite green again. The tests are becoming a maintenance burden rather than a safety net.

The “Unit” in Unit Test Doesn’t Mean Class

Unit tests are almost always taught through the lens of a class. You open a JUnit tutorial, and there’s a test file for a single class. You read a testing chapter in a textbook, and the examples are one class, one test file, one assertion. The framing is so consistent that “unit” and “class” start to feel like synonyms.

But they aren’t. Wikipedia’s definition of unit testing describes it as testing isolated source code to validate expected behavior. It refers to a unit, a component, a module. The word class doesn’t appear.

The “class = unit” interpretation got locked in through the rise of JUnit and Extreme Programming in the late 90s and early 2000s. XP pushed hard for testing at the lowest level possible, and JUnit made class-level tests the easiest thing to write. That combination shaped how an entire generation learned to test, and it stuck.

The result: we now test at such a low level that we’re often not providing much value. A class tested in total isolation, with every collaborator mocked out, tells you very little about whether the system works.

The most valuable tests draw the boundary differently. They treat a group of classes as the unit (a package, a module, a subsystem) and test its behavior from the outside. Bugs live at the boundary, not inside individual classes.

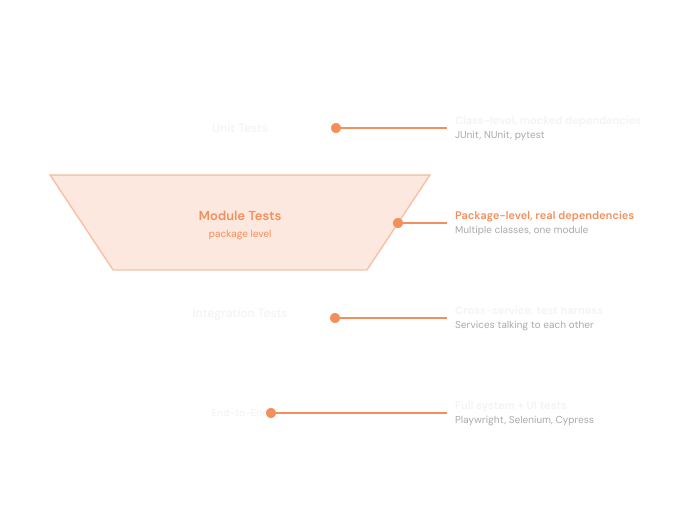

The Case for Package-Level Tests

Given the maintenance overhead of class-level unit tests, there’s a pragmatic case for pushing the boundary up to the package or module level.

If you have five classes that work together, testing them as a single unit exercises several things at once: not just the inputs and outputs of the module, but the interactions between the classes inside it. That’s more efficient, not in terms of speed, but in terms of finding the bugs that actually matter.

The maintenance picture changes significantly too. Instead of five unit test classes, each with its own setup, mocks, and assertions, you have one. One set of dependencies to configure. One set of interactions to describe. One set of assertions against the output.

And crucially: you can now refactor inside that module freely. Change how two classes talk to each other, consolidate some logic, rename an internal method. None of that touches your test. The test only cares about what goes in and what comes out.

You’re finally getting what SOLID principles were pointing at all along. The goal of a stable interface with a changeable interior isn’t just a design ideal. Your tests can enforce it and benefit from it. When the boundary is at the module level, the interior is free to change.
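Here’s a sketch of what a module-level test can look like. The “checkout” module, its underscore-prefixed internal classes, the `SAVE10` coupon, and the flat 20% tax rate are all invented for the example. The test only ever touches `checkout_total`.

```python
import unittest
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple


# --- internals of a hypothetical "checkout" module ---
@dataclass(frozen=True)
class _LineItem:
    price_cents: int
    quantity: int


class _DiscountPolicy:
    def discount_for(self, subtotal_cents: int, coupon: Optional[str]) -> int:
        return subtotal_cents // 10 if coupon == "SAVE10" else 0


class _TaxCalculator:
    def tax_on(self, amount_cents: int) -> int:
        return amount_cents * 20 // 100  # flat 20%, chosen for the example


# --- the module's public entry point ---
def checkout_total(
    items: Sequence[Tuple[int, int]], coupon: Optional[str] = None
) -> int:
    """Return the total in cents for (price_cents, quantity) pairs."""
    lines = [_LineItem(price, qty) for price, qty in items]
    subtotal = sum(line.price_cents * line.quantity for line in lines)
    discounted = subtotal - _DiscountPolicy().discount_for(subtotal, coupon)
    return discounted + _TaxCalculator().tax_on(discounted)


class CheckoutModuleTest(unittest.TestCase):
    def test_total_includes_tax(self):
        self.assertEqual(checkout_total([(1000, 2)]), 2400)

    def test_coupon_is_applied_before_tax(self):
        self.assertEqual(checkout_total([(1000, 2)], coupon="SAVE10"), 2160)

    def test_empty_cart_costs_nothing(self):
        self.assertEqual(checkout_total([]), 0)
```

You could merge `_DiscountPolicy` into `_TaxCalculator`, or replace `_LineItem` with plain tuples, and none of these tests would need to change.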

Refining Your APIs Through Testing

There’s a side effect of testing at the module level that’s easy to miss: it forces you to define the API.

To write a test against a module, you have to decide what the module actually exposes. What goes in? What comes out? What does a caller need to know? Writing those tests is one of the most effective ways to refine where your boundaries sit. If the setup for a test is awkward, that’s a signal the API is awkward. If two modules are hard to test independently, that’s a signal they’re too entangled.

This feedback loop tends to produce better-drawn lines between packages. You end up with modules that have clear, intentional interfaces and internals that are hidden by design. Other modules only interact through what’s exposed. That limits what they can depend on, reduces coupling, and makes the system easier to change over time.

And once you have a module with a stable interface, you can build test infrastructure around it. Helpers, fixtures, builders — reusable setup code that makes it easy to put the module into any state you need. That investment pays off across every test in the suite. Tests become easier to write, easier to read, and easier to extend as the system grows.
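A builder is one common shape for that kind of helper. The sketch below assumes a hypothetical `UserRegistry` facade as the module’s stable interface. `RegistryBuilder` is test-only code whose job is to put the module into a known state with as little noise as possible.

```python
import unittest


class UserRegistry:
    """Hypothetical module facade: the only thing tests and callers touch."""

    def __init__(self):
        self._users = {}

    def register(self, email: str, active: bool = True) -> None:
        if email in self._users:
            raise ValueError(f"{email} is already registered")
        self._users[email] = active

    def active_emails(self) -> list:
        return sorted(e for e, active in self._users.items() if active)


class RegistryBuilder:
    """Test helper: describes the state a test needs, then builds it."""

    def __init__(self):
        self._pending = []

    def with_user(self, email: str, active: bool = True) -> "RegistryBuilder":
        self._pending.append((email, active))
        return self  # returning self allows chained calls

    def build(self) -> UserRegistry:
        registry = UserRegistry()
        for email, active in self._pending:
            registry.register(email, active=active)
        return registry


class UserRegistryTest(unittest.TestCase):
    def test_inactive_users_are_excluded(self):
        registry = (
            RegistryBuilder()
            .with_user("ann@example.com")
            .with_user("bob@example.com", active=False)
            .build()
        )
        self.assertEqual(registry.active_emails(), ["ann@example.com"])
```

Because the builder goes through the public `register` method, it keeps working when the registry’s internals change.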

The Diamond, Not the Triangle

The test triangle is a pyramid. Wide at the base, narrow at the top. The implicit message is: build your foundation out of unit tests and everything else is a bonus. That’s the part worth questioning.

If anything, the shape should be a diamond.

At the bottom point, you have a small number of class-level unit tests, reserved for logic that’s truly isolated. Pure algorithms, critical calculations, anything that has no dependencies and has real complexity worth pinning down.

As you move toward the widest part of the diamond, the count grows. These are your module- and package-level integration tests, the real workhorse of the suite. You’re testing groups of classes working together, real interactions, real behavior. The majority of your testing investment belongs here.

Past the middle, the diamond narrows again. Broader integration tests, where multiple services talk to each other, are valuable but more expensive to set up and maintain, so you write fewer. Then come system-level tests at the top point, covering only the critical paths through the full application.

The pyramid scheme sold you on building from the bottom up with class-level tests as the foundation. The diamond says: put your weight in the middle, where the interactions are, and let the extremes stay lean.